Projects:2018s1-122 NI Autonomous Robotics Competition

Revision as of 18:23, 17 October 2018

Supervisors

Dr Hong Gunn Chew

Dr Braden Phillips

Honours Students

Alexey Havrilenko

Bradley Thompson

Joseph Lawrie

Michael Prendergast

Project Introduction

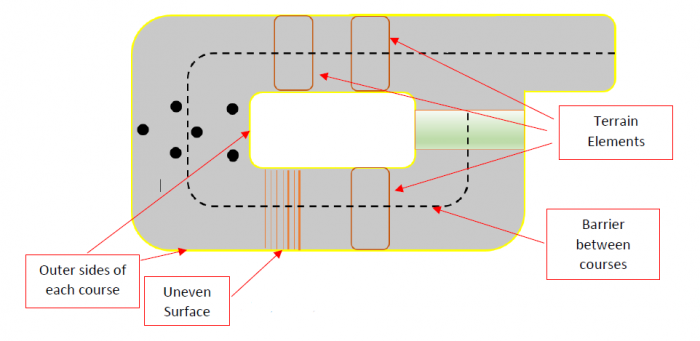

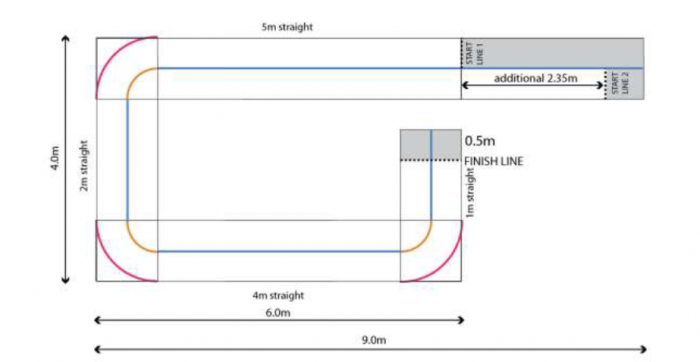

Each year, National Instruments (NI) sponsors a competition in which students showcase their robotics capabilities by building autonomous robots around one of NI's reconfigurable processor and FPGA products. In 2018, the competition theme was 'Fast Track to the Future': robots had to perform various tasks on a track that incorporated hazardous terrain and unforeseen obstacles that had to be avoided autonomously. This project investigated the use of the NI myRIO-1900 platform to achieve autonomous localisation, path planning, environmental awareness, and autonomous decision making. The live final took place in September, where university teams from across Australia, New Zealand, and Asia competed for the grand prize.

Processing Platform

NI myRIO-1900, containing a dual-core ARM processor and a Xilinx Zynq FPGA.

Programming Environment

The myRIO processor and FPGA were programmed using LabVIEW 2017, a graphical programming environment. Additional LabVIEW modules, such as the 'Vision Development Module' and the 'Control Design and Simulation Module', were used to process the received sensor information.

Environment Sensors

The robot required a variety of sensors so that it could "see" the track, obstacles, boundaries and the surrounding environment, and so that it could calculate its own position and the locations of surrounding elements.

The sensors used:

- Image sensor: Logitech C922 webcam

- Range sensors: ultrasonic & Lidar (VL53L0X time-of-flight sensors)

- Motor rotation sensors: motor encoders package

Image Sensor

A Logitech C922 webcam was used for image and video acquisition.

RGB Image Processing

The team decided to use the colour images for the following purposes:

- Identify boundaries on the floor that are marked with 50 mm and 75 mm wide coloured tape

- Identify wall boundaries

Such processing is taxing on the processor. Fortunately, the myRIO contains an FPGA that could be used for this work.

The competition track boundaries were marked on the floor with 75 mm wide yellow tape. The RGB images were processed to extract useful information. Before any image processing was attempted on the myRIO, the pipeline was determined using Matlab and its Image Processing Toolbox.

Determining Image Processing Pipeline in Matlab

An overview of the pipeline is as follows:

- Import/read captured RGB image

- Convert RGB (Red-Green-Blue) to HSV (Hue-Saturation-Value)

- The HSV representation of images allows us to easily isolate particular colours (Hue range), select colour intensity (Saturation range), and select brightness (Value range)

- Produce mask around desired colour

- Erode mask to reduce noise regions to nothing

- Dilate mask to return mask to original size

- Isolate edges of mask

- Calculate equations of the lines that run through the edges
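The steps above can be illustrated with a minimal sketch in plain Python. It is not the team's Matlab or LabVIEW code: the function names, the 3x3 neighbourhood, the HSV thresholds, and the synthetic 12x12 test image are all assumptions made for illustration. The source does not say how the line equations were fitted, so a least-squares fit through the left-most edge pixel of each row is used here as a stand-in.

```python
import colorsys

def hsv_mask(rgb, hue_range, min_sat, min_val):
    """Binary mask (rows of 0/1) of pixels whose HSV values fall in the window."""
    lo, hi = hue_range
    return [[1 if lo <= h <= hi and s >= min_sat and v >= min_val else 0
             for h, s, v in (colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
                             for r, g, b in row)]
            for row in rgb]

def _morph(mask, rule):
    """Apply `rule` (all or any) over each 3x3 neighbourhood; outside pixels count as 0."""
    rows, cols = len(mask), len(mask[0])
    def at(y, x):
        return mask[y][x] if 0 <= y < rows and 0 <= x < cols else 0
    return [[1 if rule([at(y + dy, x + dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1)]) else 0
             for x in range(cols)] for y in range(rows)]

def erode(mask):
    """A pixel survives only if its whole 3x3 neighbourhood is set."""
    return _morph(mask, all)

def dilate(mask):
    """A pixel is set if any pixel in its 3x3 neighbourhood is set."""
    return _morph(mask, any)

def edges(mask):
    """Edge pixels are those set in the mask but removed by one erosion."""
    eroded = erode(mask)
    return [[px & (1 - er) for px, er in zip(mrow, erow)]
            for mrow, erow in zip(mask, eroded)]

def left_edge_points(edge):
    """(row, column) of the left-most edge pixel in every row that has one."""
    return [(y, row.index(1)) for y, row in enumerate(edge) if 1 in row]

def fit_line(points):
    """Least-squares fit of column = m * row + c through (row, column) points."""
    n = len(points)
    sy = sum(y for y, _ in points)
    sx = sum(x for _, x in points)
    syy = sum(y * y for y, _ in points)
    syx = sum(y * x for y, x in points)
    m = (n * syx - sy * sx) / (n * syy - sy * sy)
    return m, (sx - m * sy) / n

# Synthetic image: a vertical yellow "tape" band on grey, plus one noise pixel.
YELLOW, GREY = (255, 255, 0), (90, 90, 90)
image = [[YELLOW if 4 <= x <= 7 else GREY for x in range(12)] for _ in range(12)]
image[1][10] = YELLOW  # isolated noise pixel

mask = hsv_mask(image, hue_range=(0.10, 0.20), min_sat=0.5, min_val=0.5)
opened = dilate(erode(mask))   # erosion removes the noise, dilation restores the band
edge = edges(opened)
m, c = fit_line(left_edge_points(edge))
print(f"left tape edge: column = {m:.2f} * row + {c:.2f}")
```

Eroding before dilating is what removes the isolated noise pixel: a single pixel has no fully set neighbourhood and disappears, while the four-pixel-wide band shrinks and is then grown back to its original size.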

The produced line equations were converted to obstacle locations referenced to the robot. Path planning could then make decisions based on these locations, and the robot could avoid the boundaries just as it would avoid physical obstacles.
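One way to picture this conversion is to sample points along a boundary line and hand them to the path planner as virtual obstacles. The sketch below assumes the line has already been projected from image pixels onto the ground plane in the robot's frame (x forward, y left, in metres); that projection depends on camera calibration not described here. The function names and the 0.25 m spacing are hypothetical.

```python
import math

def line_to_obstacles(p0, p1, spacing):
    """Sample points at most `spacing` metres apart along the segment p0 -> p1."""
    length = math.hypot(p1[0] - p0[0], p1[1] - p0[1])
    steps = max(1, math.ceil(length / spacing))
    return [(p0[0] + (p1[0] - p0[0]) * i / steps,
             p0[1] + (p1[1] - p0[1]) * i / steps)
            for i in range(steps + 1)]

def nearest_obstacle(points):
    """Closest virtual obstacle to the robot, which sits at the origin."""
    return min(points, key=lambda p: math.hypot(p[0], p[1]))

# A boundary segment 1 m ahead of the robot, spanning 0.5 m to either side.
obstacles = line_to_obstacles((1.0, -0.5), (1.0, 0.5), spacing=0.25)
closest = nearest_obstacle(obstacles)
print(len(obstacles), closest)
```

Treating the boundary as a row of point obstacles means the planner needs no special case for tape lines, which matches the idea of avoiding them in the same way as physical obstacles.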