Projects:2017s1-100 Face Recognition using 3D Data


Introduction

This project seeks to develop a system that is capable of recognising faces captured using commercial off-the-shelf devices. It will be able to capture depth imagery of faces and align them to a common facial pose, before using them to perform recognition. The project will involve elements of literature survey (both sensor hardware and algorithmic techniques), software development (in MATLAB), data collection, and performance comparison with existing approaches.

Objectives

Develop a system that is capable of recognising faces captured using commercial off-the-shelf devices such as the Xbox Kinect.

- Recovery of 3D data from polarimetric imagery

- Recovery of 3D data from Xbox Kinect and alignment to common pose

- Facial recognition from 3D models

Project Team

Jesse Willsmore

Orbille Piol

Michael Sadler

Supervisors

Dr Brian Ng

Dr David Booth (DST Group)

Sau-Yee Yiu (DST Group)

Philip Stephenson (DST Group)

3D data from Polarimetry

3D data from Xbox Kinect and Pre-processing

This section covers the software that interfaces the newest Kinect camera (Kinect V2) with MATLAB on a computer. It also covers the canonical preprocessing techniques applied to the 3D depth point cloud data, which result in a 3D facial image aligned to a frontal pose for face recognition.

Method & Results

Kinect and Eurecom Database Interface

Image acquisition with the Kinect V2 began by connecting the Kinect camera to MATLAB. The subject was positioned no further than 1 metre from the camera. This distance was chosen so that the depth data is captured at around the Kinect camera's 1.5 mm depth resolution. The Image Acquisition Toolbox in MATLAB allows the user to acquire three possible sets of data: a 2D RGB image, a grayscale depth map, or an RGB-D point cloud. This thesis worked with the RGB-D point cloud to be consistent with the data acquired from the available database. An added bonus is that MATLAB has already aligned the colour image with its corresponding grayscale point cloud, which prevents any misalignment caused by the difference in camera locations. The previous implementation of the Kinect with MATLAB, by contrast, involved aligning the RGB pixels with the grayscale point cloud. The figure below displays the result of the image acquisition step for the Kinect camera.

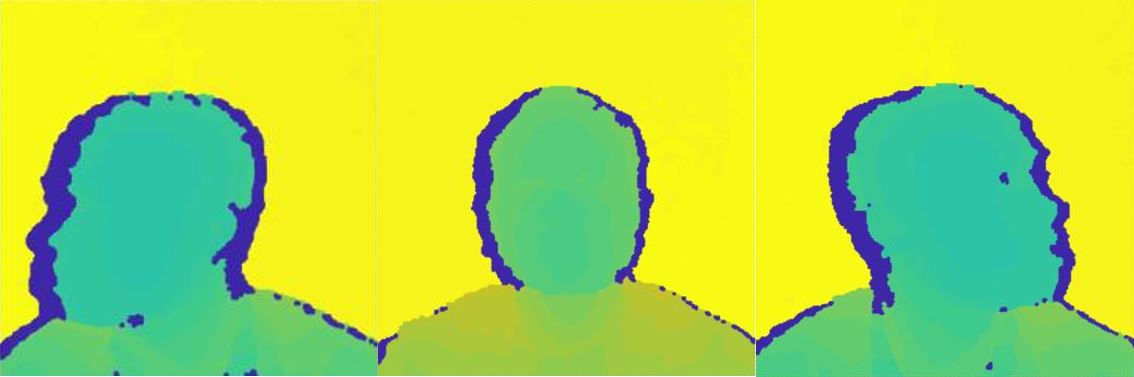

The Eurecom database was used because it already provides the arrays needed to create a point cloud for each subject, and it is the database currently used by the facial recognition software. Another reason is that its point clouds have already been processed so that the background and any outliers in front of the subject are separated from the required point cloud, which in this case is the person being captured. The image below shows the three different poses the subjects made and the initial processing already done on the database. The yellow and blue areas of the point cloud image represent the outliers, both the background and the frontal outliers. The green shaded area of the point cloud is the main area of work. The interface currently works with images that have no major occlusions, with eyeglasses as an exception. This restriction allows the nose-tip detection and face cropping stages described later to be carried out with relative ease.
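As a rough illustration of how a point cloud can be split into frontal outliers, subject, and background, the sketch below labels points by depth alone. It is not the procedure used to prepare the Eurecom database, which this page does not describe. The function names and the `near` and `far` thresholds are hypothetical, and Python is used only for illustration rather than the project's MATLAB.

```python
def label_by_depth(points, near, far):
    """Label each (x, y, z) point by its depth z in metres.

    Assumption: a simple depth band separates the subject from the
    frontal outliers (z < near) and the background (z > far).
    """
    labels = []
    for _x, _y, z in points:
        if z < near:
            labels.append("front")        # outlier in front of the subject
        elif z > far:
            labels.append("background")   # outlier behind the subject
        else:
            labels.append("subject")      # region kept for later stages
    return labels


def keep_subject(points, near, far):
    """Return only the points labelled as belonging to the subject."""
    labels = label_by_depth(points, near, far)
    return [p for p, lab in zip(points, labels) if lab == "subject"]


# Hypothetical cloud: one point too close, one on the face, one far behind.
cloud = [(0.0, 0.0, 0.3), (0.1, 0.0, 0.8), (0.0, 0.2, 2.0)]
print(label_by_depth(cloud, near=0.5, far=1.0))
print(keep_subject(cloud, near=0.5, far=1.0))
```

A fixed depth band is the simplest possible choice. A real pipeline would likely need thresholds that adapt to where the subject actually sits in the scene.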

Facial recognition from 3D models

The proposed method for face recognition utilises sparse representation and is designed to be robust under occlusion and different facial expressions.

Method

A dictionary is built for each subject from that subject's training samples. To classify a test sample, these dictionaries are used under the assumption that the training samples of each subject lie on a linear subspace.
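The linear subspace assumption can be illustrated with a simplified nearest-subspace classifier. Each subject's dictionary spans a subspace, and the test sample is assigned to the subject whose subspace leaves the smallest residual. This is a stand-in, not the project's sparse-representation method: it omits the sparsity constraint entirely. All function names and the toy data are hypothetical, and Python is used only for illustration rather than the project's MATLAB.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def orthonormal_basis(samples, tol=1e-12):
    """Gram-Schmidt: orthonormal basis for the span of one subject's samples."""
    basis = []
    for sample in samples:
        r = list(sample)
        for b in basis:
            c = dot(r, b)
            r = [ri - c * bi for ri, bi in zip(r, b)]
        norm = math.sqrt(dot(r, r))
        if norm > tol:  # skip samples that add no new direction
            basis.append([ri / norm for ri in r])
    return basis


def residual(test, basis):
    """Euclidean distance from the test sample to the subspace spanned by basis."""
    r = list(test)
    for b in basis:
        c = dot(r, b)
        r = [ri - c * bi for ri, bi in zip(r, b)]
    return math.sqrt(dot(r, r))


def classify(test, dictionaries):
    """Return the subject with the smallest residual, plus all residuals."""
    residuals = {
        name: residual(test, orthonormal_basis(samples))
        for name, samples in dictionaries.items()
    }
    return min(residuals, key=residuals.get), residuals


# Toy dictionaries: subject A spans the x-y plane, subject B the y-z plane.
dictionaries = {
    "A": [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]],
    "B": [[0.0, 0.0, 1.0], [0.0, 1.0, 1.0]],
}
label, residuals = classify([2.0, 1.0, 0.0], dictionaries)
print(label, residuals)  # the test sample lies in A's plane, so A is chosen
```

In a full sparse-representation classifier the test sample would instead be coded over all dictionaries at once with a sparsity penalty, and the per-subject residuals would be computed from that single code.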